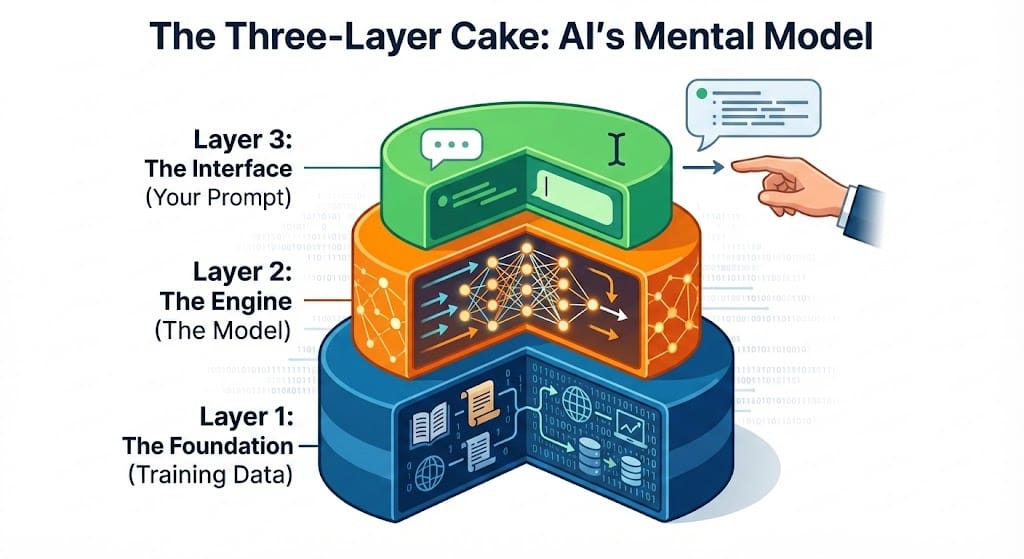

The Three-Layer Cake

How AI Actually Works

You don’t need to be a mechanic to drive a car. But you do need to know where the brakes are. AI is the same. You don’t need a PhD to use it. You just need a simple mental model.

Think of AI as a three-layer cake.

Layer 1: The Foundation (Training Data)

Before you type a word, AI has already done its homework. Massive amounts of it.

Modern models are trained on billions of pages. Books, articles, websites, and code. During this training, the model isn't memorizing facts. It’s learning patterns.

It learns how sentences are built. How arguments are structured. How experts talk.

Think of it like learning a language by living in a foreign country. You don’t memorize grammar rules. You develop an intuition for what "sounds right."

What this means for you: The AI has "read" an enormous range of professional writing: medical journals, legal briefs, financial reports. This is its superpower. It knows the "shape" of professional knowledge, even if it hasn't lived it.

Layer 2: The Engine (The Model)

The model is the engine. It’s a mathematical system—billions of numbers working together.

Here is the most important thing to understand: AI does not search a database. It doesn't "look up" an answer like Google. Instead, it generates an answer. It builds a response one word at a time by predicting what should come next.

Think of it as a Predictive Speed-Reader. When you ask, "What are the risks in this contract?" the AI isn't finding a pre-written list. It’s looking at the patterns it learned in Layer 1. It’s asking itself: "Based on every contract I’ve ever 'read,' what does a risk summary usually look like?"
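If you like to see ideas in code, here is a toy sketch of "predict the next word." It is not how a real model is built: real models use neural networks with billions of numbers, while this one just counts which word follows which in a tiny made-up text. The names `train_bigrams` and `generate`, and the sample `corpus`, are invented for illustration.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count which word follows which in the training text (the 'patterns')."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start, max_words=8, seed=0):
    """Build a response one word at a time by sampling a likely next word."""
    rng = random.Random(seed)  # fixed seed so the toy is repeatable
    output = [start]
    for _ in range(max_words - 1):
        options = follows.get(output[-1])
        if not options:
            break  # the toy model has never seen anything follow this word
        candidates, weights = zip(*options.items())
        output.append(rng.choices(candidates, weights=weights)[0])
    return " ".join(output)


# Hypothetical training text, standing in for "every contract I've ever read".
corpus = (
    "the contract carries a risk of late delivery . "
    "the contract carries a penalty for late payment . "
    "the supplier carries a risk of late payment ."
)
model = train_bigrams(corpus)
print(generate(model, "the"))
```

Notice that nothing is looked up. The sentence is assembled word by word from patterns, so it can come out as a sentence that never appeared in the training text at all.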

This is why AI is so flexible. It can write a poem, code a website, or analyze a legal brief. It isn't limited to a script.

But there’s a catch. Because it’s just predicting the next word, it can be confidently wrong. It’s predicting what a good answer looks like, not necessarily what is true.
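A small sketch can make "confidently wrong" concrete. Assume a toy predictor that simply picks the most common ending it has seen, and assume its training text repeats a popular mistake more often than the correct fact. The function `most_likely_completion` and the sample sentences are hypothetical, made up for this illustration.

```python
from collections import Counter

# Hypothetical training text: the wrong answer appears three times,
# the correct answer (Canberra) only once.
training_sentences = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]


def most_likely_completion(sentences, prompt):
    """Return the most frequent ending seen after `prompt`, plus its share."""
    completions = Counter(
        s[len(prompt):].strip() for s in sentences if s.startswith(prompt)
    )
    if not completions:
        return None, 0.0  # nothing in the training text matches the prompt
    answer, count = completions.most_common(1)[0]
    return answer, count / sum(completions.values())


answer, confidence = most_likely_completion(
    training_sentences, "the capital of australia is"
)
print(f"{answer} ({confidence:.0%})")  # prints: sydney (75%)
```

The toy is 75% "sure" of the wrong city, because it measures how often something was said, not whether it is true. Real models are far more sophisticated, but the same gap between "likely" and "true" is why you still have to check the output.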

What this means for you: AI is a "first draft" machine. It’s brilliant at starting the work, but it lacks a "truth filter." It gives you the speed; you provide the judgment.

Layer 3: The Interface (Your Prompt)

This is where you come in.

This is the only layer you control. The prompt you write—and the context you provide—shapes everything. The same model can give you a generic list or a brilliant strategy. It all depends on your input.

Think of it like briefing a new employee. If you just say, "write a report," you’ll get something useless. But if you give them a goal, a target audience, and a specific format, you get exactly what you need.
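Here is one way to picture the difference, as a minimal sketch. The helper `build_brief` and its fields (`goal`, `audience`, `output_format`) are invented for illustration. They are not part of any AI tool. They simply turn the new-employee briefing into a reusable checklist.

```python
def build_brief(task, goal=None, audience=None, output_format=None):
    """Assemble a prompt from a task plus optional briefing details."""
    lines = [f"Task: {task}"]
    if goal:
        lines.append(f"Goal: {goal}")
    if audience:
        lines.append(f"Audience: {audience}")
    if output_format:
        lines.append(f"Format: {output_format}")
    return "\n".join(lines)


# The vague version: all the model gets is the task.
vague = build_brief("Write a report")

# The briefed version: same task, plus goal, audience, and format.
detailed = build_brief(
    "Write a report",
    goal="Decide whether to renew our supplier contract",
    audience="The finance director, who has five minutes",
    output_format="One page: recommendation first, then three supporting points",
)

print(vague)
print("---")
print(detailed)
```

Both prompts go to the same model. Only the second one tells it what a good answer looks like.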

The model has all the capability. Your prompt is the key that unlocks it.

What this means for you: Prompting isn't "coding." It’s a management skill. Most frustrating AI moments aren't caused by the AI—they are caused by vague instructions. Learn to brief your partner, and the results will follow.

How a GenAI Model Thinks vs. a Human Brain

Imagine a GenAI model as a speed-reader who has read every book in the world’s largest digital library. When you ask a question, the model "vaguely" remembers all of that reading and starts predicting an answer.

What happens if the model is asked about something it hasn’t seen before? As you can guess, it can’t answer accurately, and it may start hallucinating. Hallucination means the model makes things up to complete the response. The made-up material will read as real and complete, but it can be entirely incorrect.

Example: I was reviewing this blog with Google Gemini. It gave me a well-drafted, detailed review without reading my blog! I started implementing the suggestions, only to realize that the model had never read my document.

GenAI models are powerful statistical representations of existing knowledge, but they lack the intuition and (often uncodified) judgment that a personal or business context requires.

There are some similarities between how an LLM learns and how the human brain learns. The human brain also learns from the physical and digital world, through experience.

However, LLMs do not capture many capabilities of an animal brain. For example, a newborn zebra can stand and start walking shortly after birth. That ability comes from evolution, not from observation. An LLM has nothing comparable built in.

The Bottom Line

AI is a prediction engine. It’s built on human knowledge and shaped by your instructions.

It doesn't "know" things like you do. It doesn't check facts. It doesn't have a gut feeling. But it can process and create at a speed no human can touch.

Here is the deal:

- The AI does the heavy lifting. It handles the data, the drafts, and the patterns.

- You provide the judgment. You give the context. You verify the facts. You make the final call.

That is the partnership. It’s not you vs. the machine. It’s you plus the machine.